1. The Project

A friend of mine who was concerned about the lack of AI regulation came to me with an idea for a competition he was entering. He wanted to create a tool to lobby candidates standing in future UK general elections, pushing them to commit to stronger AI regulation. He had never done a digital project before and wanted advice on the best way to approach it. The project looked fun and was something I genuinely believed in, so I offered to take on the digital side entirely while he focused on the campaign and its specifics.

This was one of the most enjoyable projects I have worked on. Building something both of us believed in made the whole process feel meaningful beyond just the technical challenge. Watching it evolve from early rough iterations into something polished and production-ready was genuinely satisfying, and the fine-tuning process gave me a real sense of ownership over the finished product. My friend and I kept a steady dialogue throughout and we are both very proud of what we created.

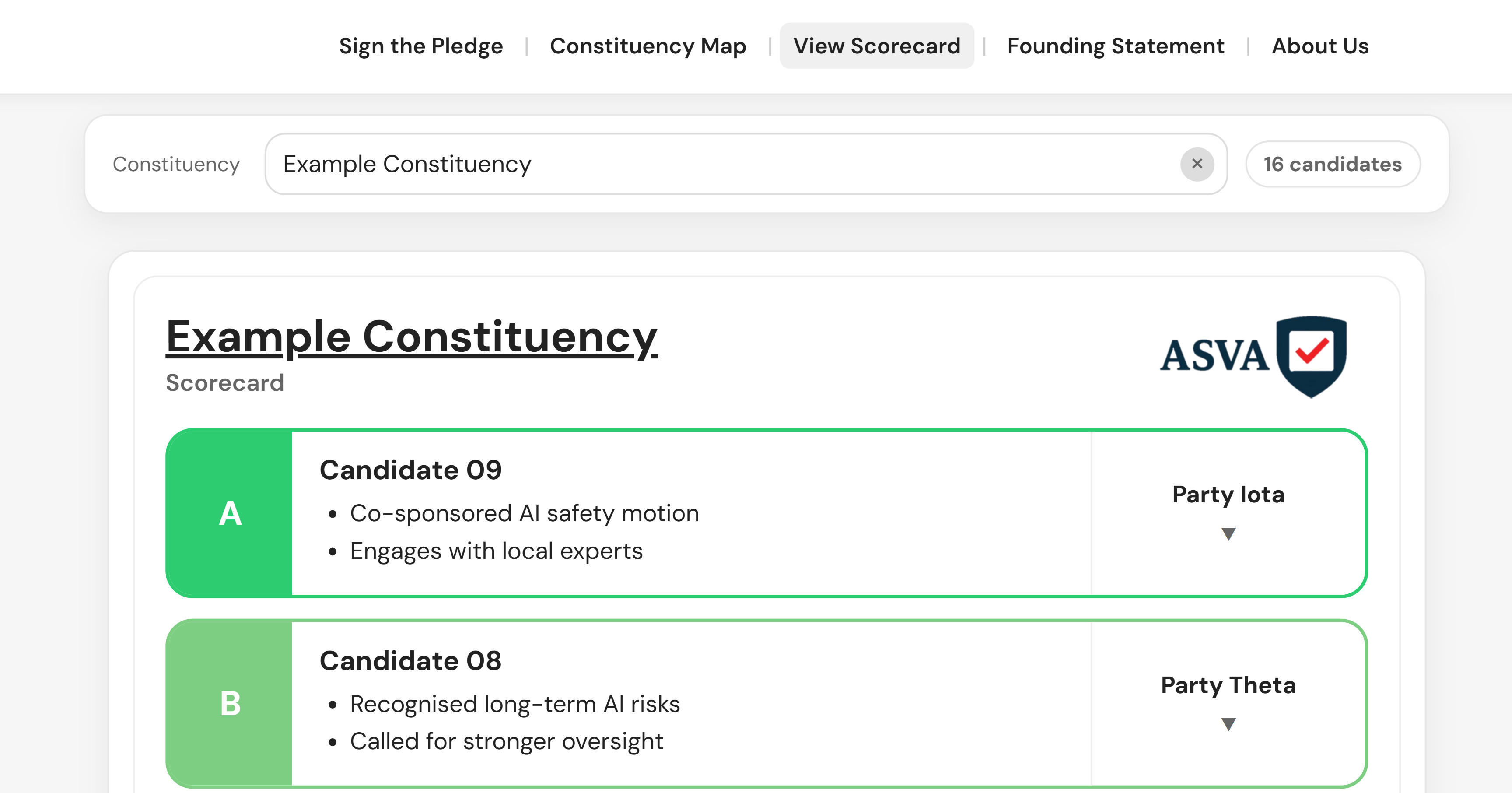

A demo using Example Constituency data is available in the current version, and an earlier iteration shows how the interface developed over time.

2. What It Does

The ASVA Scorecard is a full-stack web application that allows voters across all 650 UK parliamentary constituencies to search for their local candidates and see how they have been rated on AI governance issues. Key features include:

- A candidate scorecard with ASVA safety grades (A through F) per candidate

- An interactive hex cartogram map of all UK constituencies, switchable between a Candidate view showing ASVA grades and a Member view showing pledge sign-up density

- A membership sign-up page that automatically detects a user's constituency from their postcode

- A live database tracking both candidates and members

- A Founding Statement page with the full pledge text

- Secure serverless backend infrastructure with no exposed credentials
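
To make the two map views concrete, here is a minimal sketch of how a single hexagon's fill colour could be chosen depending on the active view. Everything in it is an assumption for illustration: the names `HexData`, `hexFill` and `memberShade`, the colour values, the four density bands, and the reading of "A through F" as six letters. It is not the project's actual rendering code.

```typescript
// Hypothetical data for one constituency hexagon.
interface HexData {
  name: string;
  grade: string | null; // ASVA grade shown for this constituency, if any
  members: number;      // pledge sign-ups recorded for this constituency
}

type MapView = "candidate" | "member";

// Assumed palette: one colour per grade, green (A) through red (F).
const GRADE_COLOURS: Record<string, string> = {
  A: "#1a9850", B: "#91cf60", C: "#d9ef8b",
  D: "#fee08b", E: "#fc8d59", F: "#d73027",
};
const NO_DATA_COLOUR = "#cccccc";

// Assumed density bands, lightest to darkest.
const MEMBER_SHADES = ["#eff3ff", "#bdd7e7", "#6baed6", "#2171b5"];

// Pick a shade by the constituency's share of the busiest constituency's sign-ups.
function memberShade(members: number, maxMembers: number): string {
  if (maxMembers <= 0 || members <= 0) {
    return MEMBER_SHADES[0];
  }
  const ratio = Math.min(members / maxMembers, 1);
  const index = Math.min(
    Math.floor(ratio * MEMBER_SHADES.length),
    MEMBER_SHADES.length - 1
  );
  return MEMBER_SHADES[index];
}

// One entry point for both views, so switching view only changes the argument.
function hexFill(view: MapView, hex: HexData, maxMembers: number): string {
  if (view === "candidate") {
    if (hex.grade !== null &&
        Object.prototype.hasOwnProperty.call(GRADE_COLOURS, hex.grade)) {
      return GRADE_COLOURS[hex.grade];
    }
    return NO_DATA_COLOUR; // ungraded or unrecognised grade
  }
  return memberShade(hex.members, maxMembers);
}
```

Routing both views through one function means the toggle only has to re-run the fill calculation with a different `view` value; the hexagon geometry stays untouched.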

3. Architecture

The project is built across several distinct layers, each chosen to keep the system maintainable, secure, and accessible to non-technical collaborators.

3.1 Database

The database holds all pledge and membership data. I designed it as a star schema, an area where I already had expertise, with a carefully structured permissions model so that public-facing queries can read only what they need and sensitive data stays behind the appropriate access controls.
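
As a rough illustration of the idea, the sketch below models a star schema in TypeScript types: one fact table of sign-ups pointing at two dimension tables. The table and field names (`SignupFact`, `MemberDim`, `ConstituencyDim`) are hypothetical, not the real schema. The public-facing function is deliberately given only the fact and constituency rows, mirroring a permissions model in which public queries never touch personal data.

```typescript
// Dimension: one row per constituency (safe to expose).
interface ConstituencyDim {
  constituencyId: number;
  name: string;
}

// Dimension: one row per member. Holds personal data, so a public
// query should never be granted access to it.
interface MemberDim {
  memberId: number;
  email: string;
  postcode: string;
}

// Fact: one row per sign-up, referencing the dimensions by key only.
interface SignupFact {
  memberId: number;
  constituencyId: number;
  signedAt: string; // ISO date string
}

// Shape returned to the public site: names and counts, nothing personal.
interface PublicCount {
  constituency: string;
  members: number;
}

// Aggregate sign-ups per constituency without reading MemberDim at all.
function publicMemberCounts(
  constituencies: ConstituencyDim[],
  signups: SignupFact[]
): PublicCount[] {
  const counts: Record<number, number> = {};
  for (const signup of signups) {
    counts[signup.constituencyId] = (counts[signup.constituencyId] || 0) + 1;
  }
  return constituencies.map(function (c) {
    return { constituency: c.name, members: counts[c.constituencyId] || 0 };
  });
}
```

In a real database the same separation would be enforced by the access rules rather than by function signatures; the types here only show which tables each kind of query needs.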

3.2 Hosting

The hosting layer manages the website and versioned deployments. Serverless functions handle live data fetching, returning counts and candidate information to the frontend as needed. All secret API keys are stored as secure environment variables, kept entirely out of the codebase.
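
The pattern can be sketched as follows. This is an assumption-laden outline rather than the deployed function: the environment variable names `DATABASE_URL` and `DATABASE_KEY`, the `CountQuery` callback, and the response shape are all hypothetical, and the query is synchronous here only to keep the example short (a real database call would be asynchronous).

```typescript
// Secrets arrive through the platform's environment, never from source code.
interface Env {
  DATABASE_URL?: string; // hypothetical variable name
  DATABASE_KEY?: string; // hypothetical variable name
}

interface CountRow {
  constituency: string;
  members: number;
}

interface JsonResponse {
  status: number;
  headers: Record<string, string>;
  body: string;
}

// Stand-in for the database client; it receives the credentials at call time.
type CountQuery = (url: string, key: string) => CountRow[];

function json(status: number, payload: unknown): JsonResponse {
  return {
    status: status,
    headers: { "content-type": "application/json" },
    body: JSON.stringify(payload),
  };
}

// Handler core: check configuration, query, return only aggregate counts.
function handleCounts(env: Env, query: CountQuery): JsonResponse {
  const url = env.DATABASE_URL;
  const key = env.DATABASE_KEY;
  if (!url || !key) {
    // Fail closed, and do not reveal which variable is missing.
    return json(500, { error: "Server is not configured." });
  }
  const rows = query(url, key);
  let total = 0;
  const byConstituency: Record<string, number> = {};
  for (const row of rows) {
    total += row.members;
    byConstituency[row.constituency] = row.members;
  }
  // The key is used for the query only and never echoed to the client.
  return json(200, { total: total, byConstituency: byConstituency });
}
```

Because the credentials are read from `env` on each invocation, the repository can stay public without exposing them, and rotating a key only requires changing the environment setting.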

3.3 Code Repository

The code repository hosts the full source. Key file types include:

- HTML files — define the structure of each page, covering the scorecard, pledge form, constituency map, and founding statement

- JavaScript files — handle all application logic, including fetching data from the backend, detecting a user's constituency from their postcode, dynamically rendering candidate cards, and the interactive map

- JSON files — store structured configuration data, including deployment settings that define how the project is built and how environment bindings are wired up

- Configuration and deployment helper files — manage the build pipeline and connect the frontend to backend functions and environment secrets
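
As an example of the kind of logic the JavaScript files carry, the sketch below shows one way the postcode step could work: tidy the user's input, reject anything that is not postcode-shaped, then hand the result to a lookup. The function names and the `ConstituencyLookup` callback are hypothetical, the lookup is synchronous only for brevity (a real call to a lookup service would be asynchronous), and the regular expression is a loose shape check, not full postcode validation.

```typescript
// Stand-in for whichever lookup service is used; returns null if not found.
type ConstituencyLookup = (postcode: string) => string | null;

interface DetectResult {
  postcode: string | null;
  constituency: string | null;
  error: string | null;
}

// Normalise to "OUTWARD INWARD" form, e.g. "sw1a1aa" becomes "SW1A 1AA".
// Returns null when the input does not have the general postcode shape.
function normalisePostcode(input: string): string | null {
  const compact = input.replace(/\s+/g, "").toUpperCase();
  // Outward code: 1-2 letters, a digit, optional digit or letter.
  // Inward code: a digit followed by two letters.
  if (!/^[A-Z]{1,2}[0-9][A-Z0-9]?[0-9][A-Z]{2}$/.test(compact)) {
    return null;
  }
  return compact.slice(0, -3) + " " + compact.slice(-3);
}

// Validate first so that obviously bad input never reaches the lookup.
function detectConstituency(
  input: string,
  lookup: ConstituencyLookup
): DetectResult {
  const postcode = normalisePostcode(input);
  if (postcode === null) {
    return {
      postcode: null,
      constituency: null,
      error: "That does not look like a UK postcode.",
    };
  }
  const constituency = lookup(postcode);
  if (constituency === null) {
    return {
      postcode: postcode,
      constituency: null,
      error: "No constituency found for that postcode.",
    };
  }
  return { postcode: postcode, constituency: constituency, error: null };
}
```

Normalising before the lookup means users can type their postcode with or without a space, in any letter case, and still get the same result.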

3.4 Content Management

A spreadsheet serves as the content management layer for candidate data. It is published and then fetched at build time, which keeps data management accessible to non-technical collaborators without giving them direct database access. On the sign-up page, a public postcode API automatically resolves a user's constituency from their postcode.
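
A build step of this kind might look roughly like the sketch below, which assumes the spreadsheet is published as CSV text and converts it into candidate records. The column names (`name`, `party`, `constituency`, `grade`) and the function names are hypothetical, the download itself is omitted, and the small parser handles quoted cells but not line breaks inside a cell.

```typescript
interface Candidate {
  name: string;
  party: string;
  constituency: string;
  grade: string;
}

// Split one CSV line into cells, honouring double-quoted cells and "" escapes.
function parseCsvLine(line: string): string[] {
  const cells: string[] = [];
  let current = "";
  let inQuotes = false;
  for (let i = 0; i < line.length; i++) {
    const ch = line.charAt(i);
    if (inQuotes) {
      if (ch === '"' && line.charAt(i + 1) === '"') {
        current += '"'; // escaped quote inside a quoted cell
        i++;
      } else if (ch === '"') {
        inQuotes = false;
      } else {
        current += ch;
      }
    } else if (ch === '"') {
      inQuotes = true;
    } else if (ch === ",") {
      cells.push(current);
      current = "";
    } else {
      current += ch;
    }
  }
  cells.push(current);
  return cells;
}

// Turn the published sheet into records, locating columns by header name
// so that collaborators can reorder columns without breaking the build.
function parseCandidates(csv: string): Candidate[] {
  const lines = csv.split(/\r?\n/).filter(function (l) {
    return l.trim() !== "";
  });
  if (lines.length === 0) {
    return [];
  }
  const header = parseCsvLine(lines[0]).map(function (h) {
    return h.trim().toLowerCase();
  });
  const out: Candidate[] = [];
  for (let r = 1; r < lines.length; r++) {
    const cells = parseCsvLine(lines[r]);
    const get = function (column: string): string {
      const i = header.indexOf(column);
      return i >= 0 && i < cells.length ? cells[i].trim() : "";
    };
    const candidate: Candidate = {
      name: get("name"),
      party: get("party"),
      constituency: get("constituency"),
      grade: get("grade").toUpperCase(),
    };
    if (candidate.name !== "") {
      out.push(candidate); // skip rows with no candidate name
    }
  }
  return out;
}
```

The resulting array can be written out as JSON during the build, so the deployed site reads static data and the spreadsheet never needs to be reachable from visitors' browsers.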

4. AI Use in Development

This project made extensive use of AI throughout the build. Early iterations were put together using Claude, with outputs committed directly to the repository. Later iterations were developed using Claude Code inside VS Code, which allowed for a much tighter feedback loop where changes were pushed and deployed automatically.

AI was the primary development tool rather than a supporting one — a deliberate choice to show how someone with a strong analytical and data background can build production-quality software by working effectively with AI.